REF 2029: a framework for a Public Knowledge Service

REF 2029 could enable radical progress towards a more inclusive, open and engaged sector. Let’s not waste this opportunity, argue NCCPE Co-directors Sophie Duncan and Paul Manners.

When the new framework for the REF was announced in June 2023, the NCCPE team were delighted.

It invited a radical rethink of the way the sector organises research: to move from a paradigm where traditional research production dominates to a knowledge ecosystem in which engagement, environment and the production of knowledge are understood as completely inter-dependent.

Put more pragmatically, it was an invitation to rethink how to ensure that the tens of billions of pounds of public money that will flow into universities in the next 10 years are spent as wisely as possible.

But it also raised concerns for many in the sector, in particular about the move to increase the weighting of the research environment from 15% to 25%, and about how this element could be assessed robustly. It also ruffled ‘culture war’ feathers, prompting accusations of ‘science gone woke’.

This is the context for the latest announcements from the REF Steering Group who are delaying the REF for a year and initiating a pilot to test the feasibility of the new proposals (just as they did when Impact assessment was controversially introduced in 2010). In parallel, they have commissioned a project to develop and test indicators that might be used in the new assessment of People, Culture and Environment.

Three focal points for the year ahead

We are looking forward to contributing to both the indicators project and the pilot, to feed in lessons learned in the public engagement community.

So how can all of us working to improve research culture work together to seize this opportunity?

The NCCPE outlined some first thoughts about the way ahead in the submission we made to the consultation on PCE last December.

1. Clarify the challenge: ask why

In our view, the challenge is how to organise the sector to deliver the highest quality ‘Public Knowledge Service’ possible (with a respectful nod to the NHS). This is something the NCCPE and our many collaborators have sought to understand since we were founded in 2008. This involves stepping back from academic and technical debates about ‘what is research culture’ to questions about strategy and purpose as knowledge institutions.

In doing this, we argued strongly that we need to draw inspiration from how other sectors – health, business, charities – have sought to continually improve the quality and public benefit of their work and to demonstrate accountability and transparency. Given that assessing organisational culture is hard, learning lessons from other sectors, with sophisticated approaches that have been evolving for many years, could help.

For example, the Donabedian model, used in health to assess and compare the quality of health care organisations, provides a helpful framework for defining the determinants of a well-run system:

| Dimension | What it measures |
| --- | --- |
| Structure dimensions | Structural measures describe a provider’s capacity, systems, and processes to provide high-quality care [or research]. |
| Process dimensions | Process measures indicate what a provider does to maintain or improve health [or research]. These measures typically reflect generally accepted recommendations for effective practice. |
| Outcome indicators | Outcome measures reflect the impact of the service or intervention. In health, that captures the impact on patients. |

This framework could really help – and the focus on ‘process’ dimensions could also help us to develop the proposed measure of ‘rigour’ in assessing Engagement and Impact.

2. Define the territory: ask what

A second basic question is to ask ourselves exactly what is in scope. What ‘business’ are universities in when they spend public money on research? What are the core purposes of the ‘Public Knowledge Service’ they are part of? Clarifying this is essential if the assessment system is to focus on the right questions.

The Science Europe Values Framework identifies four purposes for research. Assessing People, Culture and Environment could then be asking – what investments and interventions are you making to enable each of these to operate in an optimal way? And for each, what ‘structure’, ‘process’ and ‘outcome’ measures could be evolved to provide useful intelligence on how well they are working?

Science Europe Research Culture Framework (2022)

3. Avoid checklists and tick boxes: ask how

Finally, the REF 2029 guidance proposes ‘a more tightly defined, questionnaire-style template that will create greater consistency across submissions and focus on demonstrable outcomes’.

At the NCCPE we worry that this could shortcut the developmental power of a well-designed assessment system. Checklists or tick boxes have their place – but an approach that invites self-reflection and learning and provides a scaffold to enable robust judgements to be made, and which is sensitive to the diversity of the sector, would be much more valuable.

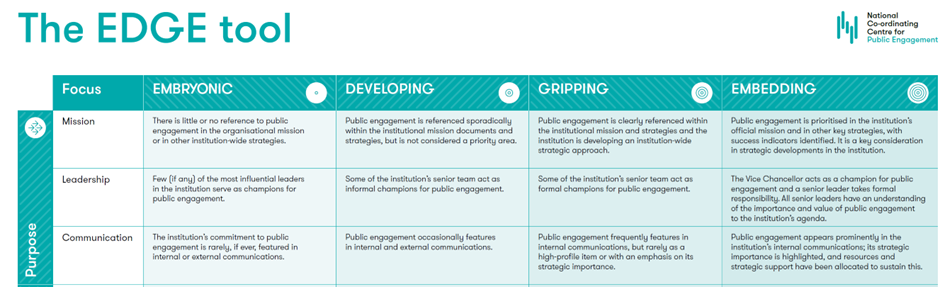

The NCCPE has spent 15+ years developing ways to support strategic culture change, and we have settled on an approach used in many sectors to support organisational development: a maturity model.

We used this approach to develop a tool to help universities assess their support for public engagement, based upon a robust analysis of the determinants of a mature environment. We are confident that this kind of approach could be developed for research culture as a whole, to provide a robust representation of the factors that need to be taken into account in developing and assessing a strategic approach.

A snapshot of the NCCPE’s EDGE tool

All to play for

It’s a challenging time for the HE sector. As economic pressures mount, competition between HEIs intensifies, and claims of ‘wokery’ circle around this work, the temptation to act defensively and to take short-term measures could dominate.

Let’s not waste the opportunity the REF offers to step back, think long term and work collectively to ensure our contribution to knowledge and understanding continues to evolve and meet the needs of society.

References

- Donabedian, A (2005) Evaluating the Quality of Medical Care, The Milbank Quarterly, 83(4):691-729

- Raleigh, VS and Foot, C (2010) Getting the Measure of Quality: Opportunities and Challenges, London: King’s Fund

- Maturity Models: https://en.wikipedia.org/wiki/Maturity_model